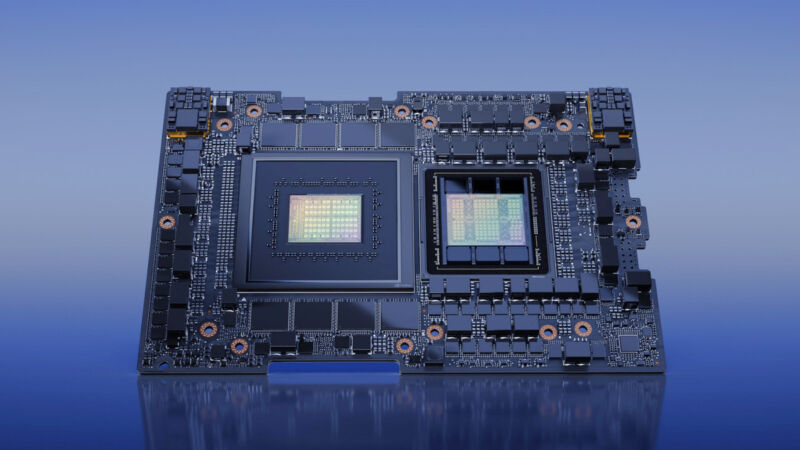

Nvidia’s new monster CPU+GPU chip could power the next gen of AI chatbots

Early last week at COMPUTEX, Nvidia announced that its new GH200 Grace Hopper “Superchip,” a combination CPU and GPU specifically created for large-scale AI applications, has entered full production. It’s a beast. It has 528 GPU tensor cores, supports up to 480GB of CPU RAM and 96GB of GPU RAM, and boasts a GPU memory bandwidth of up to 4TB per second.
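For a sense of scale, here is a rough Python calculation of how long a single read of all 96GB of GPU memory would take at the quoted peak of 4TB per second. It assumes decimal units (1TB = 1,000GB) and ideal, sustained peak bandwidth, which real workloads rarely reach; the function name `full_sweep_seconds` is a hypothetical illustration.

```python
# Back-of-the-envelope: one full pass over the GH200's GPU memory at peak bandwidth.
# Assumptions: decimal units (1 TB = 1000 GB) and that the quoted peak is sustained.

GPU_MEMORY_GB = 96          # GPU RAM quoted for the GH200
PEAK_BANDWIDTH_TB_S = 4.0   # "up to 4TB per second"

def full_sweep_seconds(capacity_gb: float, bandwidth_tb_s: float) -> float:
    """Seconds needed to read capacity_gb once at bandwidth_tb_s (ideal peak)."""
    return capacity_gb / (bandwidth_tb_s * 1000.0)

t = full_sweep_seconds(GPU_MEMORY_GB, PEAK_BANDWIDTH_TB_S)
print(f"{t * 1000:.1f} ms")  # 24.0 ms
```

In other words, under these idealized assumptions the GPU could stream through its entire memory roughly 40 times per second.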

We’ve previously covered the Nvidia H100 Hopper chip, which is currently Nvidia’s most powerful data center GPU. It powers AI models like OpenAI’s ChatGPT, and it marked a significant upgrade over 2020’s A100 chip, which powered the first round of training runs for many of the news-making generative AI chatbots and image generators we’re talking about today.

Faster GPUs roughly translate into more powerful generative AI models because they can run more matrix multiplications in parallel (and do it faster), which is necessary for today’s artificial neural networks to function.
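Why matrix multiplication parallelizes so well can be shown with a small Python sketch. This is a conceptual toy, not how GPUs are actually programmed: each entry of a matrix product is a dot product that depends only on one row and one column, so every entry can be computed independently and in any order. The helper names `matmul_cell` and `matmul_parallel` are hypothetical. Python threads will not actually speed this up because of the global interpreter lock; a GPU instead runs thousands of such dot products at once in hardware.

```python
from concurrent.futures import ThreadPoolExecutor
import random

def matmul_cell(a, b, i, j):
    """Entry C[i][j]: dot product of row i of A with column j of B.

    It reads only A[i] and column j of B and writes nothing shared,
    so each cell is independent of every other cell.
    """
    return sum(a[i][k] * b[k][j] for k in range(len(b)))

def matmul_parallel(a, b, workers=4):
    """Compute C = A x B by handing each cell to a worker as a separate task."""
    n, m = len(a), len(b[0])
    cells = [(i, j) for i in range(n) for j in range(m)]
    random.shuffle(cells)  # order does not matter because the cells are independent
    c = [[0] * m for _ in range(n)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(lambda ij: (ij, matmul_cell(a, b, *ij)), cells)
        for (i, j), value in results:
            c[i][j] = value
    return c

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul_parallel(A, B))  # [[19, 22], [43, 50]]
```

Shuffling the task order before dispatch is deliberate: the result is identical regardless, which is exactly the property that lets hardware compute all the cells simultaneously.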

The GH200 takes that “Hopper” foundation and combines it with Nvidia’s “Grace” CPU platform (both named after computer pioneer Grace Hopper), rolling it into one chip through Nvidia’s NVLink chip-to-chip (C2C) interconnect technology. Nvidia expects the combination to dramatically accelerate AI and machine-learning applications in both training (creating a model) and inference (running it).
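To make the training/inference distinction concrete, here is a deliberately tiny Python sketch that fits a one-parameter linear model with plain gradient descent. The functions `train` and `infer` and the toy data are hypothetical illustrations; real AI training runs the same kind of loop over billions of parameters, with the arithmetic expressed as matrix operations on GPUs.

```python
def train(xs, ys, lr=0.01, steps=500):
    """Training: repeatedly adjust w so that predictions w * x approach targets y."""
    w = 0.0
    for _ in range(steps):
        # Gradient of mean squared error (1/n) * sum((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= lr * grad  # one gradient-descent update
    return w

def infer(w, x):
    """Inference: apply the already-learned parameter to new input; no updates."""
    return w * x

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]   # toy data generated by y = 2x
w = train(xs, ys)
print(round(w, 3), round(infer(w, 10.0), 2))  # 2.0 20.0
```

Training is the expensive, iterative part (many passes that update parameters); inference is a single forward computation with the parameters frozen, which is why the two workloads stress hardware differently.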

“Generative AI is rapidly transforming businesses, unlocking new opportunities and accelerating discovery in healthcare, finance, business services and many more industries,” said Ian Buck, vice president of accelerated computing at Nvidia, in a press release. “With Grace Hopper Superchips in full production, manufacturers worldwide will soon provide the accelerated infrastructure enterprises need to build and deploy generative AI applications that leverage their unique proprietary data.”

According to the company, key features of the GH200 include a new 900GB/s coherent (shared) memory interface, which is seven times faster than PCIe Gen5. The GH200 also offers 30 times higher aggregate system memory bandwidth to the GPU compared with the Nvidia DGX A100, a system built on the A100 chip mentioned above. Additionally, the GH200 can run all Nvidia software platforms, including the Nvidia HPC SDK, Nvidia AI, and Nvidia Omniverse.
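The “seven times” figure is easy to sanity-check. The Python sketch below assumes the comparison point is a PCIe Gen5 x16 link (32 GT/s per lane, 128b/130b encoding) with both directions counted; the article does not say which PCIe configuration Nvidia used, so treat these parameters as assumptions.

```python
# Assumptions (not stated in the article): a PCIe Gen5 x16 link,
# both directions counted, 128b/130b line encoding.
GT_PER_S_PER_LANE = 32      # PCIe 5.0 signaling rate, gigatransfers/s per lane
LANES = 16
ENCODING = 128 / 130        # 128b/130b line-code efficiency
NVLINK_C2C_GB_S = 900       # GH200 coherent interface, from the article

def pcie_gen5_gb_s(lanes=LANES, bidirectional=True):
    """Usable PCIe Gen5 bandwidth in GB/s for the given lane count."""
    per_direction = GT_PER_S_PER_LANE * lanes * ENCODING / 8  # gigabits -> gigabytes
    return per_direction * (2 if bidirectional else 1)

pcie = pcie_gen5_gb_s()
print(f"PCIe Gen5 x16: {pcie:.0f} GB/s, ratio: {NVLINK_C2C_GB_S / pcie:.1f}x")
# PCIe Gen5 x16: 126 GB/s, ratio: 7.1x
```

If the encoding overhead is ignored (a common rounding to 128GB/s), the ratio comes out at about 7.0; either way the result lands near seven.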

Notably, Nvidia also announced that it will be building this combo CPU/GPU chip into a new supercomputer called the DGX GH200, which can use the combined power of 256 GH200 chips to perform as a single GPU. It provides 1 exaflop of performance and 144 terabytes of shared memory, nearly 500 times more memory than the previous-generation Nvidia DGX A100.
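The DGX GH200 headline numbers can be roughly reconstructed from the per-chip figures above. This Python sketch rests on several assumptions that the article does not state: that “144 terabytes” counts both CPU and GPU RAM in binary-style units (1TB = 1,024GB), that the DGX A100 baseline is the 320GB configuration (eight 40GB A100s), and that each chip contributes about 3,958 TFLOPS of FP8 Tensor Core throughput with sparsity.

```python
# Per-chip figures quoted in the article.
CHIPS = 256
CPU_MEMORY_GB = 480
GPU_MEMORY_GB = 96

# Assumption: "144 terabytes" counts CPU + GPU RAM, with 1 TB = 1024 GB.
total_gb = CHIPS * (CPU_MEMORY_GB + GPU_MEMORY_GB)
total_tb = total_gb / 1024

# Assumption: the baseline is the 320 GB (8 x 40 GB) DGX A100 configuration.
DGX_A100_GB = 320
memory_ratio = total_gb / DGX_A100_GB

# Assumption: ~3,958 TFLOPS per chip (FP8 Tensor Core, with sparsity).
FP8_SPARSE_TFLOPS_PER_CHIP = 3958
exaflops = CHIPS * FP8_SPARSE_TFLOPS_PER_CHIP / 1_000_000  # 1 EFLOPS = 10^6 TFLOPS

print(total_gb, total_tb, round(memory_ratio), round(exaflops, 2))
# 147456 144.0 461 1.01
```

If these assumptions hold, the “1 exaflop” refers to low-precision AI arithmetic rather than the double-precision math traditionally used to rank supercomputers, and “nearly 500 times” is a rounding of roughly 460.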

The DGX GH200 will be capable of training giant next-generation AI models (GPT-6, anyone?) for generative language applications, recommender systems, and data analytics. Nvidia didn’t announce pricing for the GH200, but according to AnandTech, a single DGX GH200 computer is “easily going to cost somewhere in the low 8 digits,” that is, in the low tens of millions of dollars.

Overall, it’s fair to say that thanks to continued hardware advancements from vendors like Nvidia and Cerebras, high-end cloud AI models will likely continue to become more capable over time, processing more data and doing it much faster than before. Let’s just hope they don’t argue with tech journalists.