Google’s RT-2 AI mannequin brings us one step nearer to WALL-E

On Friday, Google DeepMind introduced Robotic Transformer 2 (RT-2), a “first-of-its-kind” vision-language-action (VLA) mannequin that makes use of information scraped from the Internet to allow higher robotic management by way of plain language instructions. The last word objective is to create general-purpose robots that may navigate human environments, much like fictional robots like WALL-E or C-3PO.

When a human needs to be taught a activity, we regularly learn and observe. In an analogous approach, RT-2 makes use of a big language mannequin (the tech behind ChatGPT) that has been educated on textual content and pictures discovered on-line. RT-2 makes use of this info to acknowledge patterns and carry out actions even when the robotic hasn’t been particularly educated to do these duties—an idea known as generalization.

For instance, Google says that RT-2 can permit a robotic to acknowledge and throw away trash with out having been particularly educated to take action. It makes use of its understanding of what trash is and the way it’s often disposed to information its actions. RT-2 even sees discarded meals packaging or banana peels as trash, regardless of the potential ambiguity.

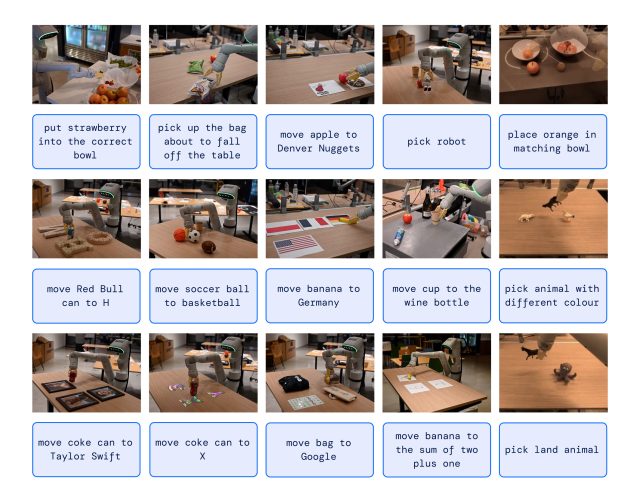

In one other instance, The New York Times recounts a Google engineer giving the command, “Choose up the extinct animal,” and the RT-2 robotic locates and picks out a dinosaur from a number of three collectible figurines on a desk.

This functionality is notable as a result of robots have usually been educated from an enormous variety of manually acquired information factors, making that course of tough because of the excessive time and value of masking each doable state of affairs. Put merely, the actual world is a dynamic mess, with altering conditions and configurations of objects. A sensible robotic helper wants to have the ability to adapt on the fly in methods which can be unattainable to explicitly program, and that is the place RT-2 is available in.

Greater than meets the attention

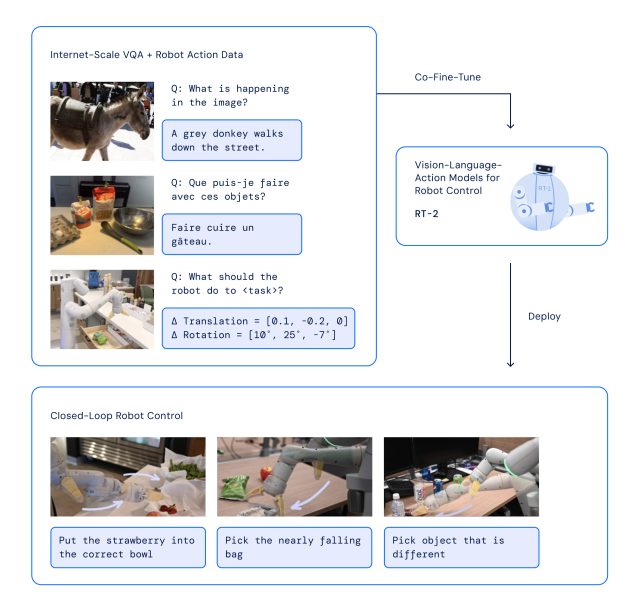

With RT-2, Google DeepMind has adopted a method that performs on the strengths of transformer AI models, identified for his or her capability to generalize info. RT-2 attracts on earlier AI work at Google, together with the Pathways Language and Picture mannequin (PaLI-X) and the Pathways Language mannequin Embodied (PaLM-E). Moreover, RT-2 was additionally co-trained on information from its predecessor mannequin (RT-1), which was collected over a interval of 17 months in an “workplace kitchen atmosphere” by 13 robots.

The RT-2 structure entails fine-tuning a pre-trained VLM mannequin on robotics and net information. The ensuing mannequin processes robotic digicam photos and predicts actions that the robotic ought to execute.

Since RT-2 makes use of a language mannequin to course of info, Google selected to symbolize actions as tokens, that are historically fragments of a phrase. “To manage a robotic, it have to be educated to output actions,” Google writes. “We tackle this problem by representing actions as tokens within the mannequin’s output—much like language tokens—and describe actions as strings that may be processed by customary pure language tokenizers.”

In growing RT-2, researchers used the identical technique of breaking down robotic actions into smaller elements as they did with the primary model of the robotic, RT-1. They discovered that by turning these actions right into a sequence of symbols or codes (a “string” illustration), they might educate the robotic new expertise utilizing the identical studying fashions they use for processing net information.

The mannequin additionally makes use of chain-of-thought reasoning, enabling it to carry out multi-stage reasoning like selecting another device (a rock as an improvised hammer) or choosing the perfect drink for a drained particular person (an vitality drink).

Google says that in over 6,000 trials, RT-2 was discovered to carry out in addition to its predecessor, RT-1, on duties that it was educated for, known as “seen” duties. Nevertheless, when examined with new, “unseen” situations, RT-2 virtually doubled its efficiency to 62 % in comparison with RT-1’s 32 %.

Though RT-2 exhibits an excellent capacity to adapt what it has discovered to new conditions, Google acknowledges that it is not good. Within the “Limitations” part of the RT-2 technical paper, the researchers admit that whereas together with web data within the coaching materials “boosts generalization over semantic and visible ideas,” it doesn’t magically give the robotic new talents to carry out bodily motions that it hasn’t already discovered from its predecessor’s robotic coaching information. In different phrases, it could possibly’t carry out actions it hasn’t bodily practiced earlier than, but it surely will get higher at utilizing the actions it already is aware of in new methods.

Whereas Google DeepMind’s final objective is to create general-purpose robots, the corporate is aware of that there’s nonetheless loads of analysis work forward earlier than it will get there. However know-how like RT-2 looks like a robust step in that course.