HBM4 could finally double memory bandwidth to 2,048-bit

In context: The first iteration of high-bandwidth memory (HBM) was somewhat limited, allowing speeds of only up to 128 GB/s per stack. It also came with one major caveat: graphics cards that used HBM1 were capped at 4 GB of memory due to physical limitations.

Over time, HBM manufacturers such as SK Hynix and Samsung improved upon HBM’s shortcomings. The first update, HBM2, doubled potential speeds to 256 GB/s per stack and raised the maximum capacity to 8 GB. In 2018, HBM2 received a minor update (HBM2E), which further increased capacity limits to 24 GB and brought another speed increase, ultimately hitting 460 GB/s per stack at its peak.

When HBM3 rolled out, the speed nearly doubled again, allowing for a maximum of 819 GB/s per stack. Even more impressive, capacities increased nearly threefold, from 24 GB to 64 GB. Like HBM2, HBM3 saw a mid-life upgrade, HBM3E, which raised theoretical speeds to as much as 1.2 TB/s per stack.

Along the way, HBM was slowly replaced in consumer-grade graphics cards by more affordable GDDR memory. High-bandwidth memory became a standard in data centers, with manufacturers of workplace-focused cards opting to use the much faster interface.

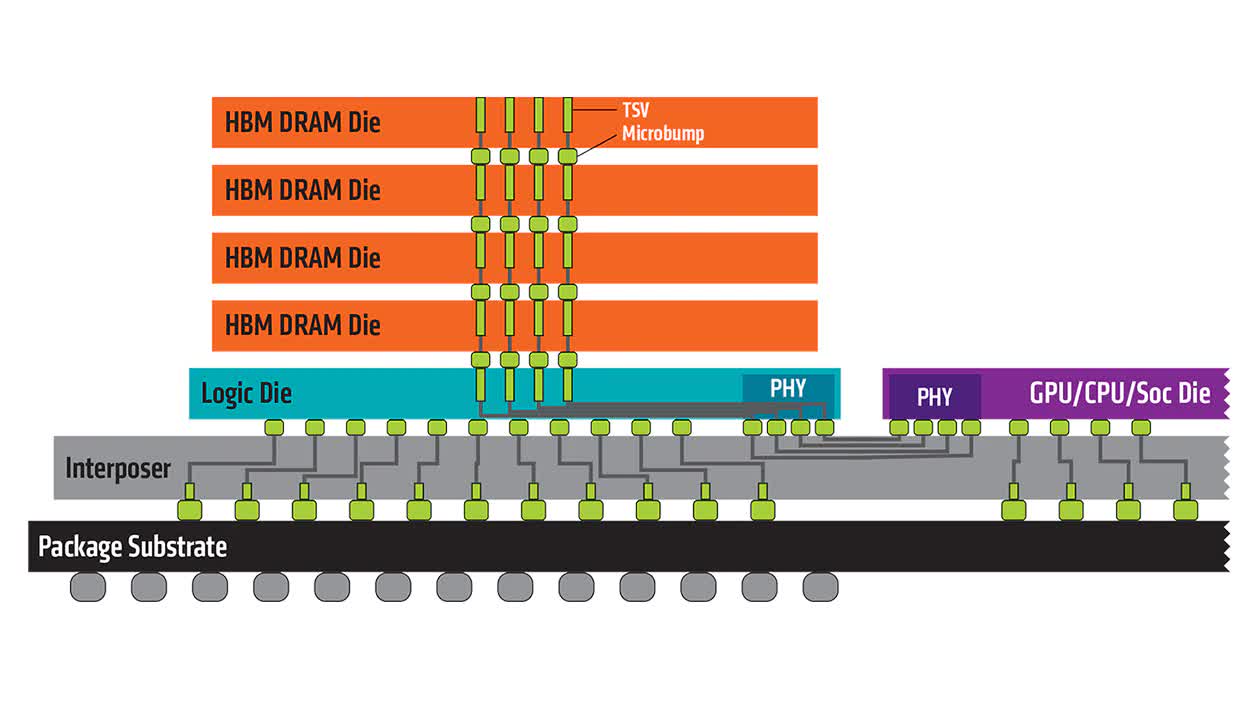

Throughout the various updates and improvements, HBM retained the same 1,024-bit (per stack) interface in all its iterations. According to a report out of Korea, this may finally change when HBM4 reaches the market. If the claims are valid, the memory interface will double from 1,024 bits to 2,048 bits.

Jumping to a 2,048-bit interface could theoretically double transfer speeds again. Unfortunately, memory manufacturers might be unable to maintain the same per-pin transfer rates with HBM4 as with HBM3E. However, a wider memory interface would allow manufacturers to use fewer stacks in a card.
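The trade-off between interface width and transfer rate can be illustrated with a rough calculation. The sketch below assumes peak per-stack bandwidth is simply interface width times per-pin data rate, divided by eight to convert bits to bytes. The 6.4 Gb/s per-pin figure is an assumption chosen to match the 819 GB/s quoted for HBM3 above, and the HBM4 pin rates are purely hypothetical, since no HBM4 rates appear in the report.

```python
def stack_bandwidth_gbs(width_bits: int, pin_rate_gbps: float) -> float:
    """Peak per-stack bandwidth in GB/s: width (bits) x per-pin rate (Gb/s) / 8."""
    return width_bits * pin_rate_gbps / 8


# Assumed per-pin rate, chosen to reproduce the article's 819 GB/s HBM3 figure.
HBM3_PIN_RATE_GBPS = 6.4

hbm3 = stack_bandwidth_gbs(1024, HBM3_PIN_RATE_GBPS)

# Hypothetical HBM4 scenarios: same pin rate vs. half the pin rate.
hbm4_same_rate = stack_bandwidth_gbs(2048, HBM3_PIN_RATE_GBPS)
hbm4_half_rate = stack_bandwidth_gbs(2048, HBM3_PIN_RATE_GBPS / 2)

print(f"HBM3, 1,024-bit:            {hbm3:.1f} GB/s")
print(f"2,048-bit, same pin rate:   {hbm4_same_rate:.1f} GB/s")
print(f"2,048-bit, half pin rate:   {hbm4_half_rate:.1f} GB/s")
```

Under these assumptions, a 2,048-bit stack only doubles bandwidth if the per-pin rate holds steady. At half the pin rate, it merely matches a 1,024-bit stack.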

For instance, Nvidia’s flagship AI card, the H100, currently uses six 1,024-bit known good stacked dies, which allows for a 6,144-bit interface. If the memory interface doubled to 2,048 bits, Nvidia could theoretically halve the number of dies to three and achieve the same performance. Of course, it’s unclear which path manufacturers will take, as HBM4 is almost certainly years away from being in production.
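The stack-count arithmetic in that example is easy to check. This minimal sketch uses only the figures quoted above (six 1,024-bit stacks); the helper name `stacks_needed` is made up for illustration.

```python
import math


def stacks_needed(total_bus_bits: int, stack_width_bits: int) -> int:
    """Smallest number of stacks whose combined width covers the target bus."""
    return math.ceil(total_bus_bits / stack_width_bits)


# Figures as stated in the article: six stacks, 1,024 bits each.
total_bus_bits = 6 * 1024

print(total_bus_bits)                        # total interface width in bits
print(stacks_needed(total_bus_bits, 1024))   # stacks at the current width
print(stacks_needed(total_bus_bits, 2048))   # stacks at the rumored HBM4 width
```

This only compares aggregate interface width. Matching actual performance would also require each wider stack to sustain the same per-pin transfer rate.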

Currently, both SK Hynix and Samsung believe they will be able to achieve a “100% yield” with HBM4 when they begin to manufacture it. Only time will tell if the reports hold water, so take the news with a grain of salt.