Intel’s “Gaudi 3” AI accelerator chip may give Nvidia’s H100 a run for its money

On Tuesday, Intel revealed a new AI accelerator chip called Gaudi 3 at its Vision 2024 event in Phoenix. With strong claimed performance while running large language models (like those that power ChatGPT), the company has positioned Gaudi 3 as an alternative to Nvidia’s H100, a popular data center GPU that has been subject to shortages, though apparently that is easing somewhat.

Compared to Nvidia’s H100 chip, Intel projects a 50 percent faster training time on Gaudi 3 for both OpenAI’s GPT-3 175B LLM and the 7-billion parameter version of Meta’s Llama 2. In terms of inference (running the trained model to get outputs), Intel claims that its new AI chip delivers 50 percent faster performance than the H100 for Llama 2 and Falcon 180B, which are both relatively popular open-weights models.

Intel is targeting the H100 because of its high market share, but the chip isn’t Nvidia’s most powerful AI accelerator chip in the pipeline. Announcements of the H200 and the Blackwell B200 have since surpassed the H100 on paper, but neither of those chips is out yet (the H200 is expected in the second quarter of 2024, basically any day now).

Meanwhile, the aforementioned H100 supply issues have been a major headache for tech companies and AI researchers who have to fight for access to any chips that can train AI models. This has led several tech companies like Microsoft, Meta, and OpenAI (rumor has it) to seek their own AI-accelerator chip designs, although that custom silicon is typically manufactured by either Intel or TSMC. Google has its own line of tensor processing units (TPUs) that it has been using internally since 2015.

Given those issues, Intel’s Gaudi 3 may be an attractive alternative to the H100 if Intel can hit an ideal price (which Intel has not provided, but an H100 reportedly costs around $30,000–$40,000) and maintain adequate production. AMD also manufactures a competitive range of AI chips, such as the AMD Instinct MI300 Series, that sell for around $10,000–$15,000.

Gaudi 3 performance

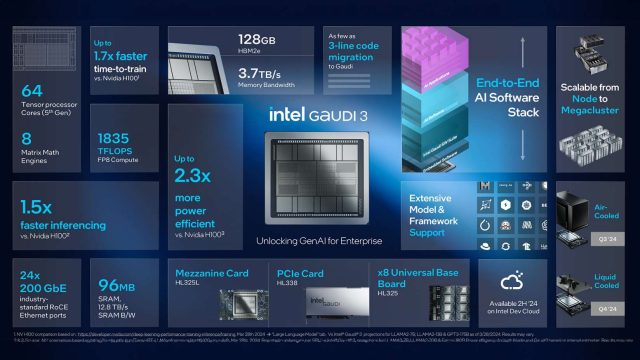

Intel says the new chip builds upon the architecture of its predecessor, Gaudi 2, by featuring two identical silicon dies connected by a high-bandwidth connection. Each die contains a central cache memory of 48 megabytes, surrounded by four matrix multiplication engines and 32 programmable tensor processor cores, bringing the total cores to 64.
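To make the per-die arithmetic explicit, here is a minimal sketch that scales the per-die figures quoted above to the full two-die package. The class and function names are illustrative only and do not come from Intel; the numbers are the ones stated in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DieSpec:
    """Per-die resources as quoted in the article (names are hypothetical)."""
    cache_mb: int = 48        # central cache memory per die, in megabytes
    matrix_engines: int = 4   # matrix multiplication engines per die
    tensor_cores: int = 32    # programmable tensor processor cores per die


def chip_totals(die: DieSpec, dies: int = 2) -> dict[str, int]:
    """Scale per-die resources by the number of identical dies."""
    return {
        "cache_mb": die.cache_mb * dies,
        "matrix_engines": die.matrix_engines * dies,
        "tensor_cores": die.tensor_cores * dies,
    }


totals = chip_totals(DieSpec())
print(totals)
```

Under these figures, the package would carry 64 tensor processor cores, eight matrix multiplication engines, and 96MB of cache across both dies.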

The chipmaking giant claims that Gaudi 3 delivers double the AI compute performance of Gaudi 2 using 8-bit floating-point infrastructure, which has become crucial for training transformer models. The chip also offers a fourfold boost for computations using the BFloat16 number format. Gaudi 3 also features 128GB of the less expensive HBM2e memory (which may contribute to price competitiveness) and 3.7TB/s of memory bandwidth.
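For a rough sense of what those memory figures could mean for the models mentioned in this article, the sketch below estimates weight footprints and the minimum time to read all weights once from memory. It is a back-of-the-envelope illustration, not an Intel benchmark. It assumes one byte per parameter for 8-bit floating point and two bytes for BFloat16, treats the bandwidth figure as 3.7TB per second, and ignores activations, key-value caches, and any multi-chip sharding. All function names are hypothetical.

```python
HBM_CAPACITY_GB = 128       # memory capacity quoted in the article
HBM_BANDWIDTH_GB_S = 3700   # 3.7 TB/s, assuming the figure is per second

# Assumed storage cost per parameter for each number format.
BYTES_PER_PARAM = {"fp8": 1, "bf16": 2}


def weights_gb(params_billions: float, fmt: str) -> float:
    """Approximate weight footprint in GB (billions of params x bytes each)."""
    return params_billions * BYTES_PER_PARAM[fmt]


def fits_on_one_chip(params_billions: float, fmt: str) -> bool:
    """True if the weights alone fit in a single chip's memory."""
    return weights_gb(params_billions, fmt) <= HBM_CAPACITY_GB


def min_ms_per_weight_pass(params_billions: float, fmt: str) -> float:
    """Lower bound, in milliseconds, on reading every weight once."""
    return weights_gb(params_billions, fmt) / HBM_BANDWIDTH_GB_S * 1000


models = [("Llama 2 7B", 7), ("Llama 2 70B", 70), ("Falcon 180B", 180)]
for name, size in models:
    for fmt in ("fp8", "bf16"):
        print(
            f"{name:12s} {fmt:4s} "
            f"{weights_gb(size, fmt):6.0f} GB  "
            f"fits={fits_on_one_chip(size, fmt)}  "
            f"min pass={min_ms_per_weight_pass(size, fmt):.1f} ms"
        )
```

Under these assumptions, a 70-billion parameter model would fit on a single chip in 8-bit form but not in BFloat16, which is one reason the choice of number format matters alongside raw capacity.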

Since data centers are well known to be power-hungry, Intel emphasizes the power efficiency of Gaudi 3, claiming 40 percent greater inference power efficiency than Nvidia’s H100 across the 7B and 70B parameter versions of Llama and the 180B parameter Falcon model. Eitan Medina, chief operating officer of Intel’s Habana Labs, attributes this advantage to Gaudi’s large-matrix math engines, which he claims require significantly less memory bandwidth compared to other architectures.

Gaudi vs. Blackwell

Last month, we covered the splashy launch of Nvidia’s Blackwell architecture, including the B200 GPU, which Nvidia claims will be the world’s most powerful AI chip. It seems natural, then, to compare what we know about Nvidia’s highest-performing AI chip to the best of what Intel can currently produce.

For starters, Gaudi 3 is being manufactured using TSMC’s N5 process technology, according to IEEE Spectrum, narrowing the gap between Intel and Nvidia in terms of semiconductor fabrication technology. The upcoming Nvidia Blackwell chip will use a custom N4P process, which reportedly offers modest performance and efficiency improvements over N5.

Gaudi 3’s use of HBM2e memory (as we mentioned above) is notable compared to the more expensive HBM3 or HBM3e used in competing chips, offering a balance of performance and cost-efficiency. This choice seems to emphasize Intel’s strategy to compete not only on performance but also on price.

As for raw performance comparisons between Gaudi 3 and the B200, those can’t be known until the chips have been released and benchmarked by a third party.

As the race to power the tech industry’s thirst for AI computation heats up, IEEE Spectrum notes that the next generation of Intel’s Gaudi chip, code-named Falcon Shores, remains a point of interest. It also remains to be seen whether Intel will continue to rely on TSMC’s technology or leverage its own foundry business and upcoming nanosheet transistor technology to gain a competitive edge in the AI accelerator market.