AMD admits its Instinct MI300X AI accelerator still can’t quite beat Nvidia’s H100 Hopper

In context: The first official performance benchmarks for AMD’s Instinct MI300X accelerator, designed for data center and AI applications, have surfaced. Compared to Nvidia’s Hopper, the new chip secured mixed results in MLPerf Inference v4.1, an industry-standard benchmarking tool for AI systems with workloads designed to evaluate AI accelerator training and inference performance.

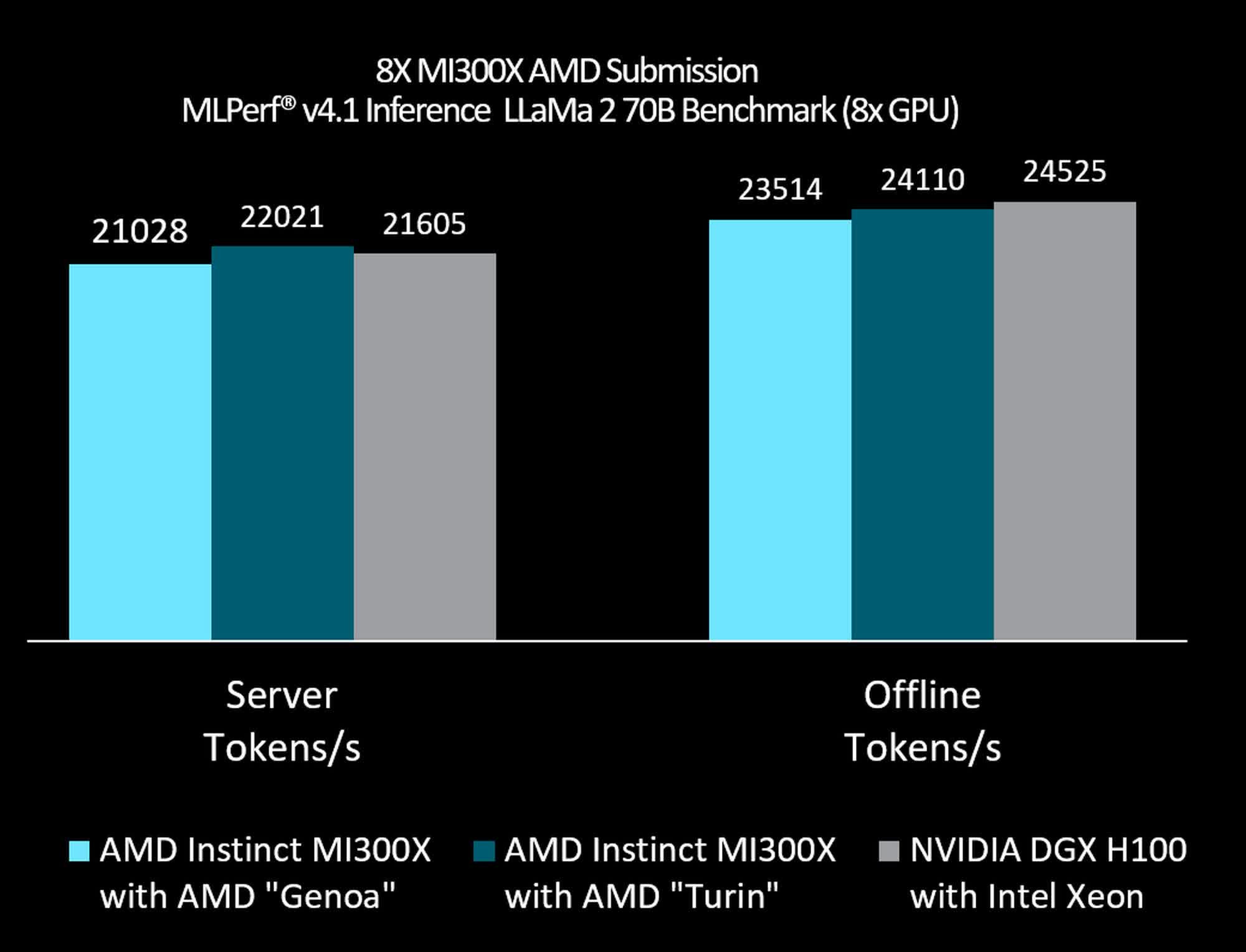

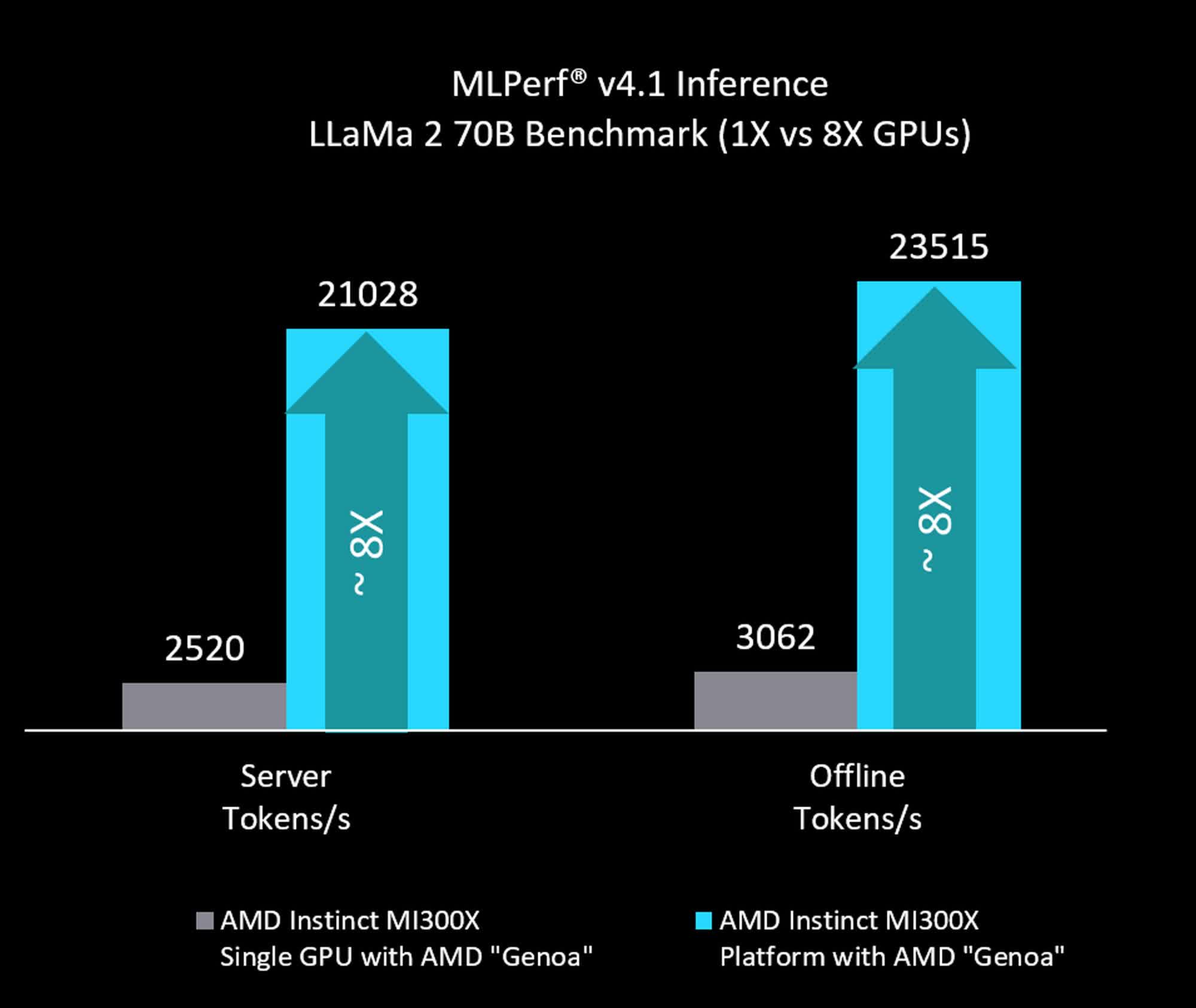

On Wednesday, AMD released benchmarks comparing the performance of its MI300X with Nvidia’s H100 GPU to showcase its generative AI inference capabilities. For the LLaMA2-70B model, a system with eight Instinct MI300X processors reached a throughput of 21,028 tokens per second in server mode and 23,514 tokens per second in offline mode when paired with an EPYC Genoa CPU. The numbers are slightly lower than those achieved by eight Nvidia H100 accelerators, which hit 21,605 tokens per second in server mode and 24,525 tokens per second in offline mode when paired with an unspecified Intel Xeon processor.

When tested with an EPYC Turin processor, the MI300X fared a little better, reaching a throughput of 22,021 tokens per second in server mode, slightly higher than the H100’s score. However, in offline mode, the MI300X still scored lower than the H100 system, reaching only 24,110 tokens per second.
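To put those gaps in proportion, the following minimal sketch expresses each MI300X result as a percentage difference from the corresponding H100 score. It uses only the throughput figures quoted above; the helper name `relative_gap_percent` is purely illustrative.

```python
# Throughput figures (tokens per second) as quoted in the article.
H100 = {"server": 21_605, "offline": 24_525}
MI300X = {
    "EPYC Genoa": {"server": 21_028, "offline": 23_514},
    "EPYC Turin": {"server": 22_021, "offline": 24_110},
}


def relative_gap_percent(score: float, baseline: float) -> float:
    """Return how far `score` sits above (+) or below (-) `baseline`, in percent."""
    return (score - baseline) / baseline * 100.0


if __name__ == "__main__":
    for cpu, modes in MI300X.items():
        for mode, tokens_per_s in modes.items():
            gap = relative_gap_percent(tokens_per_s, H100[mode])
            print(f"MI300X + {cpu}, {mode}: {gap:+.1f}% vs H100")
```

On these numbers, every gap is under 5 percent, and the only case where the MI300X comes out ahead is server mode with the EPYC Turin CPU.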

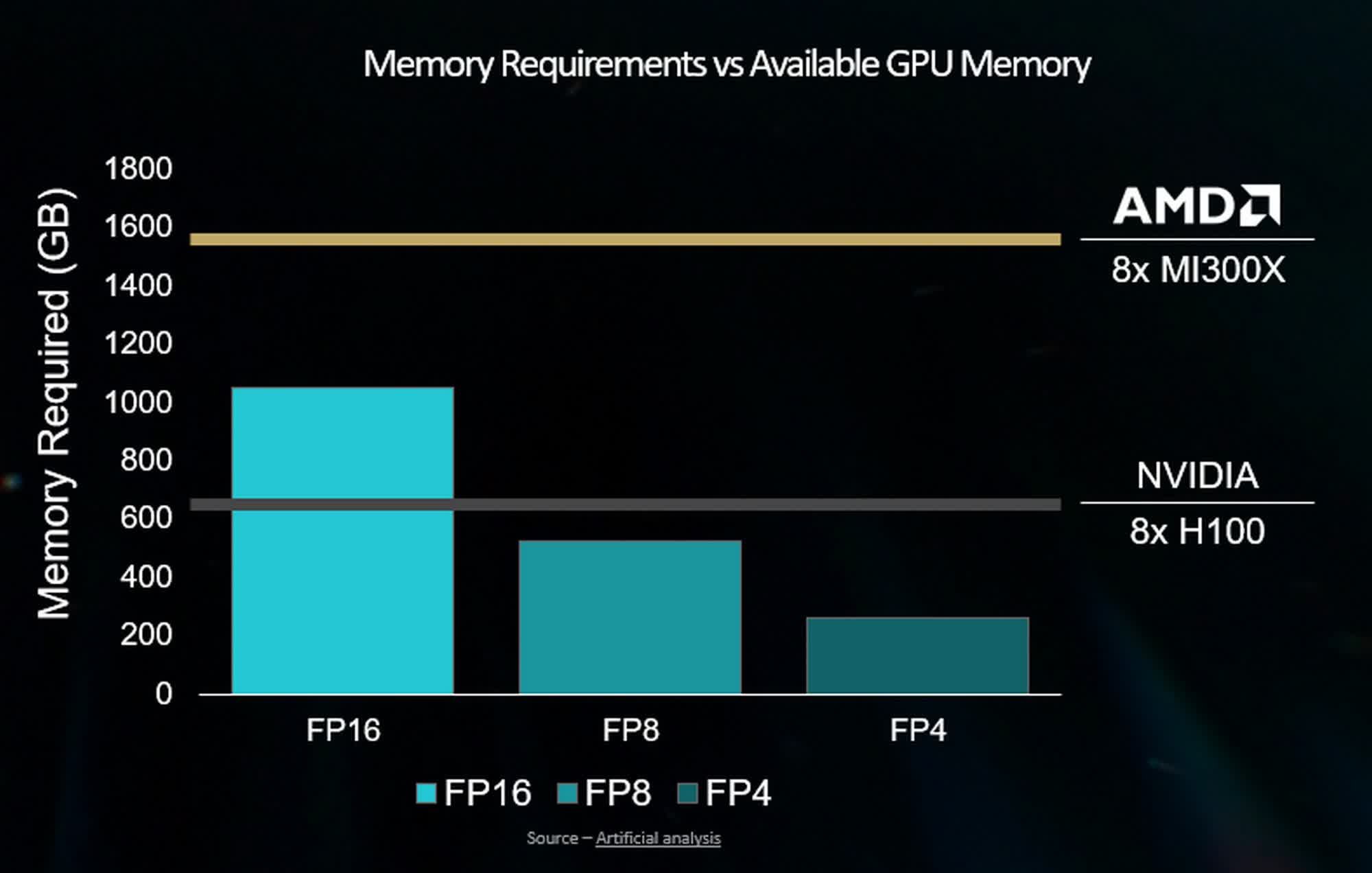

The MI300X supports higher memory capacity than the H100, potentially allowing it to run a 70-billion-parameter model like LLaMA2-70B on a single GPU at FP8 precision, thereby avoiding the network overhead associated with splitting the model across multiple GPUs. For reference, each instance of the Instinct MI300X features 192 GB of HBM3 memory and delivers a peak memory bandwidth of 5.3 TB/s. In comparison, the Nvidia H100 supports up to 80 GB of HBM3 memory with up to 3.35 TB/s of GPU bandwidth.
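A rough back-of-the-envelope check of the single-GPU claim is sketched below. It assumes the footprint is simply the parameter count times the bytes per parameter (1 byte for FP8, 2 bytes for FP16) and counts the weights only; the KV cache, activations, and runtime overhead are deliberately ignored, so real headroom would be smaller. The function name is hypothetical.

```python
def weight_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


PARAMS = 70e9                                   # 70 billion parameters
HBM_CAPACITY_GB = {"MI300X": 192, "H100": 80}   # capacities quoted in the article
BYTES_PER_PARAM = {"FP8": 1, "FP16": 2}         # assumption: weights only

if __name__ == "__main__":
    for precision, nbytes in BYTES_PER_PARAM.items():
        weights_gb = weight_footprint_gb(PARAMS, nbytes)
        for gpu, capacity_gb in HBM_CAPACITY_GB.items():
            headroom_gb = capacity_gb - weights_gb
            verdict = "fits" if headroom_gb > 0 else "does not fit"
            print(f"{precision} on {gpu}: weights {weights_gb:.0f} GB, "
                  f"headroom {headroom_gb:.0f} GB ({verdict})")
```

Under these simplifying assumptions, FP8 weights occupy about 70 GB, which leaves roughly 122 GB free on an MI300X but only about 10 GB on an 80 GB H100 for everything else the model needs at run time.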

The results largely align with Nvidia’s recent claims that its Blackwell and Hopper chips offer massive performance gains over competing solutions, including the AMD Instinct MI300X. Nvidia also provided data showing that in LLaMA2 tests, a system with eight MI300X processors reached only 23,515 tokens per second at 750 watts in offline mode. Meanwhile, the H100 achieved 24,525 tokens per second at 700 watts. The numbers for server mode are similar, with the MI300X hitting 21,028 tokens per second, while the H100 scored 21,606 tokens per second at lower wattage.
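The offline figures can be turned into a rough efficiency comparison, sketched below. The article does not say whether the 750 W and 700 W values are per accelerator or measured at the wall, so this sketch only compares the ratio of throughput to the quoted wattage, which comes out the same as long as both eight-GPU systems are counted the same way. Server mode is left out because no exact wattage is given for it.

```python
def tokens_per_second_per_watt(tokens_per_s: float, watts: float) -> float:
    """Throughput divided by the quoted power figure."""
    return tokens_per_s / watts


# Offline-mode figures attributed to Nvidia in the article.
mi300x = tokens_per_second_per_watt(23_515, 750)
h100 = tokens_per_second_per_watt(24_525, 700)

# Ratio > 1 means the H100 system delivers more throughput per quoted watt.
efficiency_ratio = h100 / mi300x

if __name__ == "__main__":
    print(f"MI300X: {mi300x:.2f} tokens/s per quoted watt")
    print(f"H100:   {h100:.2f} tokens/s per quoted watt")
    print(f"H100 advantage: {(efficiency_ratio - 1) * 100:.1f}%")
```

Taken at face value, the quoted numbers give the H100 system an advantage of a little under 12 percent in throughput per watt, though that rests entirely on the power figures as reported.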
